AI teams up with data encryption

Encrypt your AI and ML models and let them take advantage of secure computing.

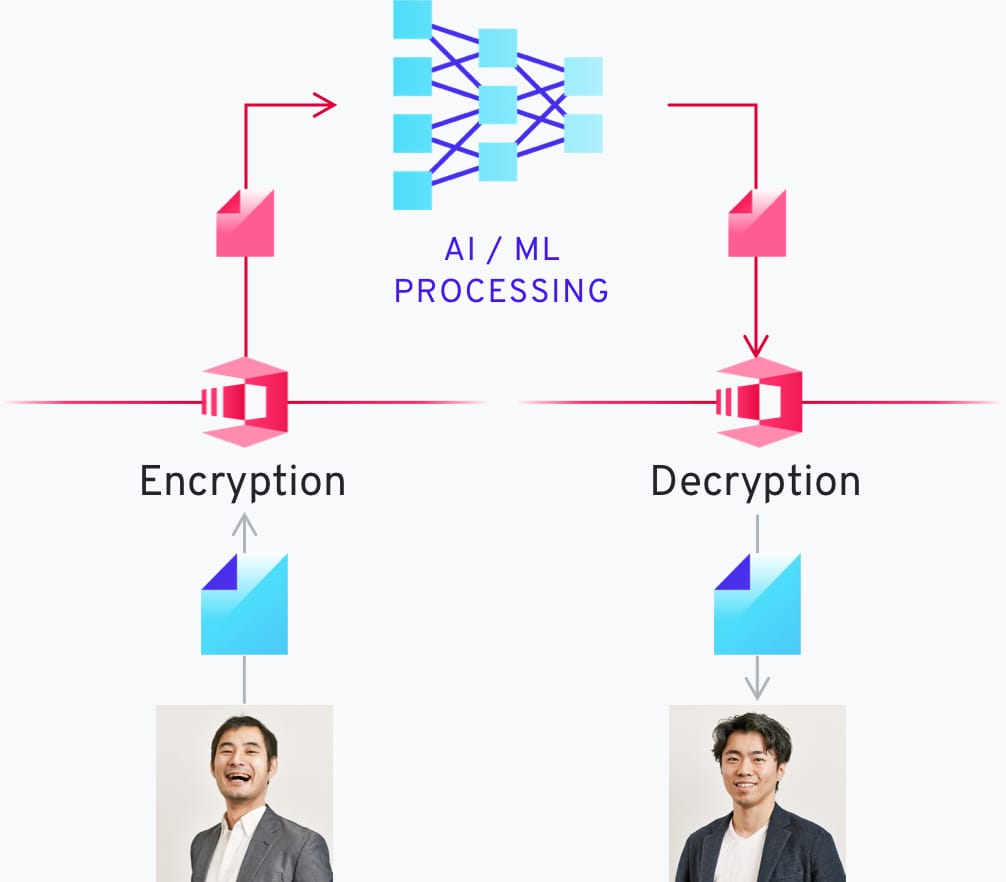

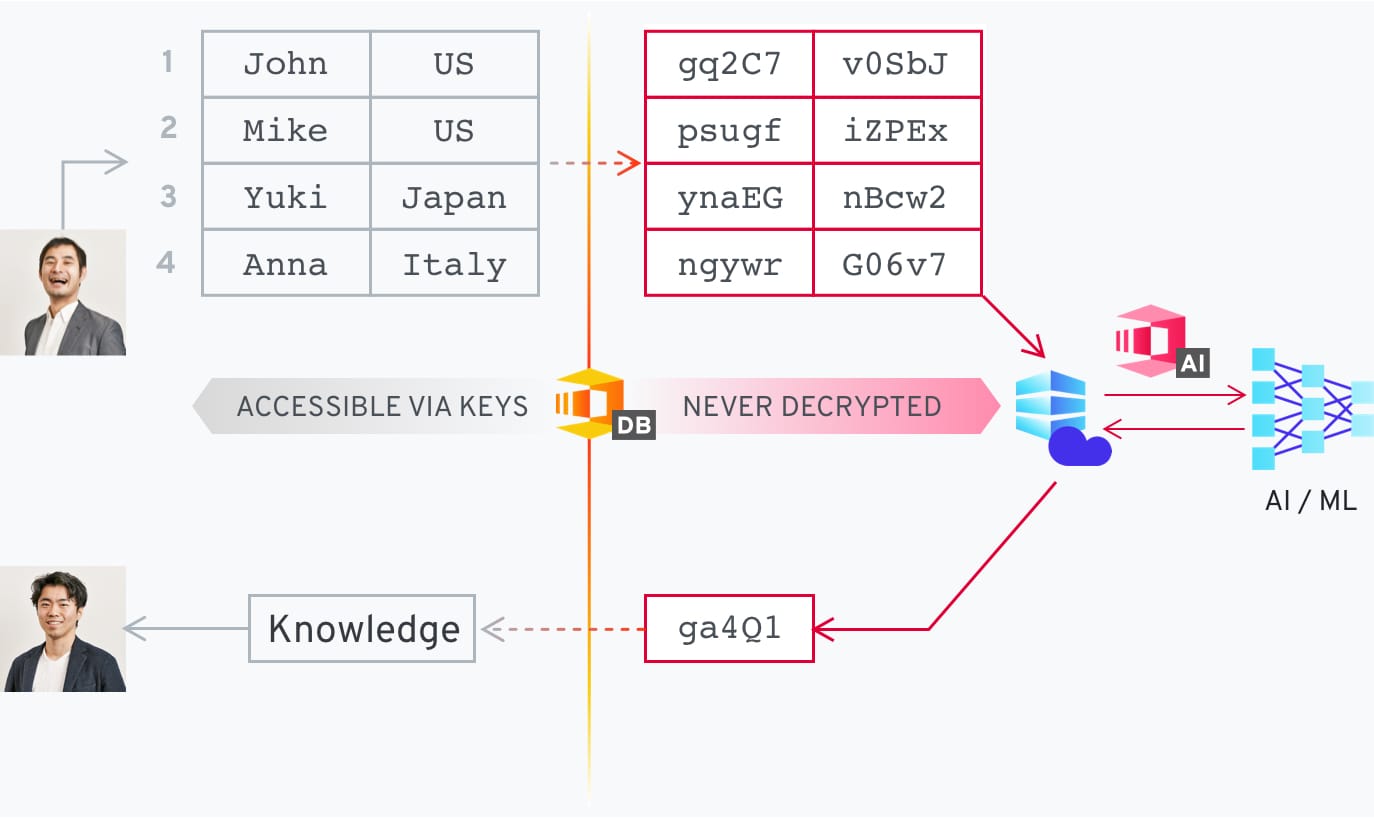

Create AI models that can process encrypted data

With our technology you can process data without decrypting it first. Your AI models can run and make predictions directly on the encrypted source information.
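As a rough illustration of what computing on encrypted data can look like, the sketch below scores a small linear model over encrypted features. It assumes an additively homomorphic scheme (a toy Paillier implementation); the page does not say which scheme DataArmor Gate AI uses, so this is not its API. The function names `keygen`, `encrypt`, `decrypt`, and `encrypted_linear_score`, the integer weights, and the tiny key size are all assumptions made for the example.

```python
import math
import secrets

# Toy parameters: two well-known Mersenne primes. Far too small for real use.
P = 2**31 - 1
Q = 2**61 - 1


def keygen(p=P, q=Q):
    """Return (public modulus n, private key). Hypothetical helper."""
    n = p * q
    lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)  # lcm(p-1, q-1)
    mu = pow(lam, -1, n)  # valid because the generator is g = n + 1
    return n, (lam, mu)


def encrypt(n, m):
    """Encrypt integer m under public key n (negative values wrap mod n)."""
    n2 = n * n
    while True:
        r = secrets.randbelow(n - 1) + 1  # fresh randomness per ciphertext
        if math.gcd(r, n) == 1:
            break
    return (pow(n + 1, m % n, n2) * pow(r, n, n2)) % n2


def decrypt(n, priv, c):
    """Decrypt c and map the result back to a signed integer."""
    lam, mu = priv
    m = ((pow(c, lam, n * n) - 1) // n * mu) % n
    return m - n if m > n // 2 else m


def encrypted_linear_score(n, enc_features, weights, bias):
    """Compute Enc(sum(w_i * x_i) + bias) using only the public key.

    Multiplying ciphertexts adds the plaintexts; raising a ciphertext to an
    integer power multiplies its plaintext by that integer.
    """
    n2 = n * n
    acc = encrypt(n, bias)
    for c, w in zip(enc_features, weights):
        acc = (acc * pow(c, w % n, n2)) % n2
    return acc


# Data owner: generates keys and encrypts the features.
n, priv = keygen()
features = [3, 10, 7]
enc_features = [encrypt(n, x) for x in features]

# Model host: sees only ciphertexts and the public key.
weights, bias = [2, -1, 4], 5
enc_score = encrypted_linear_score(n, enc_features, weights, bias)

# Data owner: decrypts the prediction.
print(decrypt(n, priv, enc_score))  # → 29
```

In this sketch the party running the model never holds the private key, so it cannot read the inputs or the result. An additive scheme like this only covers linear operations on integers; models with non-linear layers would need a different or more capable scheme.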

Enable AI operation in adverse conditions

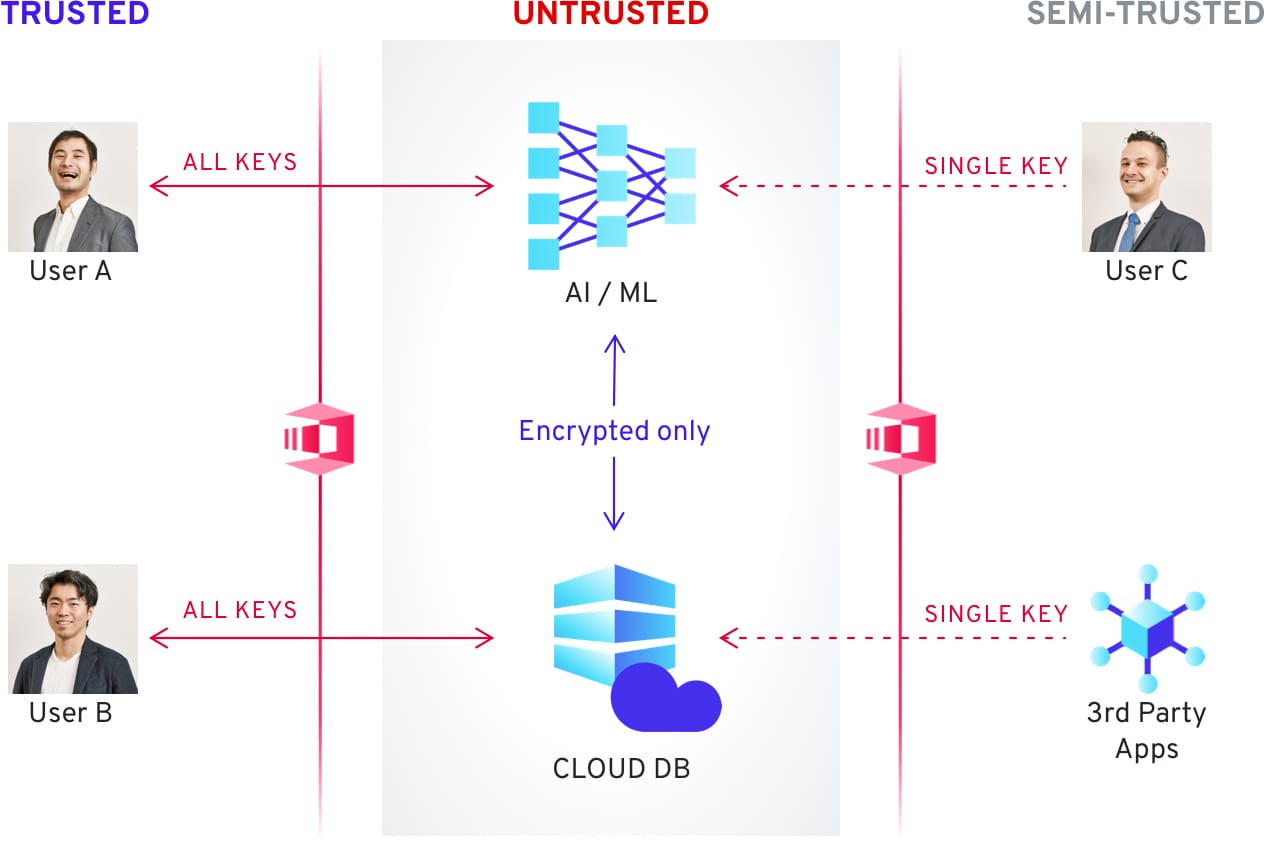

Your data stays safe while it is being processed because it is never decrypted, and the keys are not stored together with the AI models. From input to output, the processing pipeline remains encrypted, which decreases operational risk and lowers monitoring requirements. We support autonomous machine learning operations with security by design.

Empower your business with machine learning for confidential data

Use your data for machine learning in the cloud without your cloud provider, or any attacker, being able to decrypt it. Your information always stays yours.

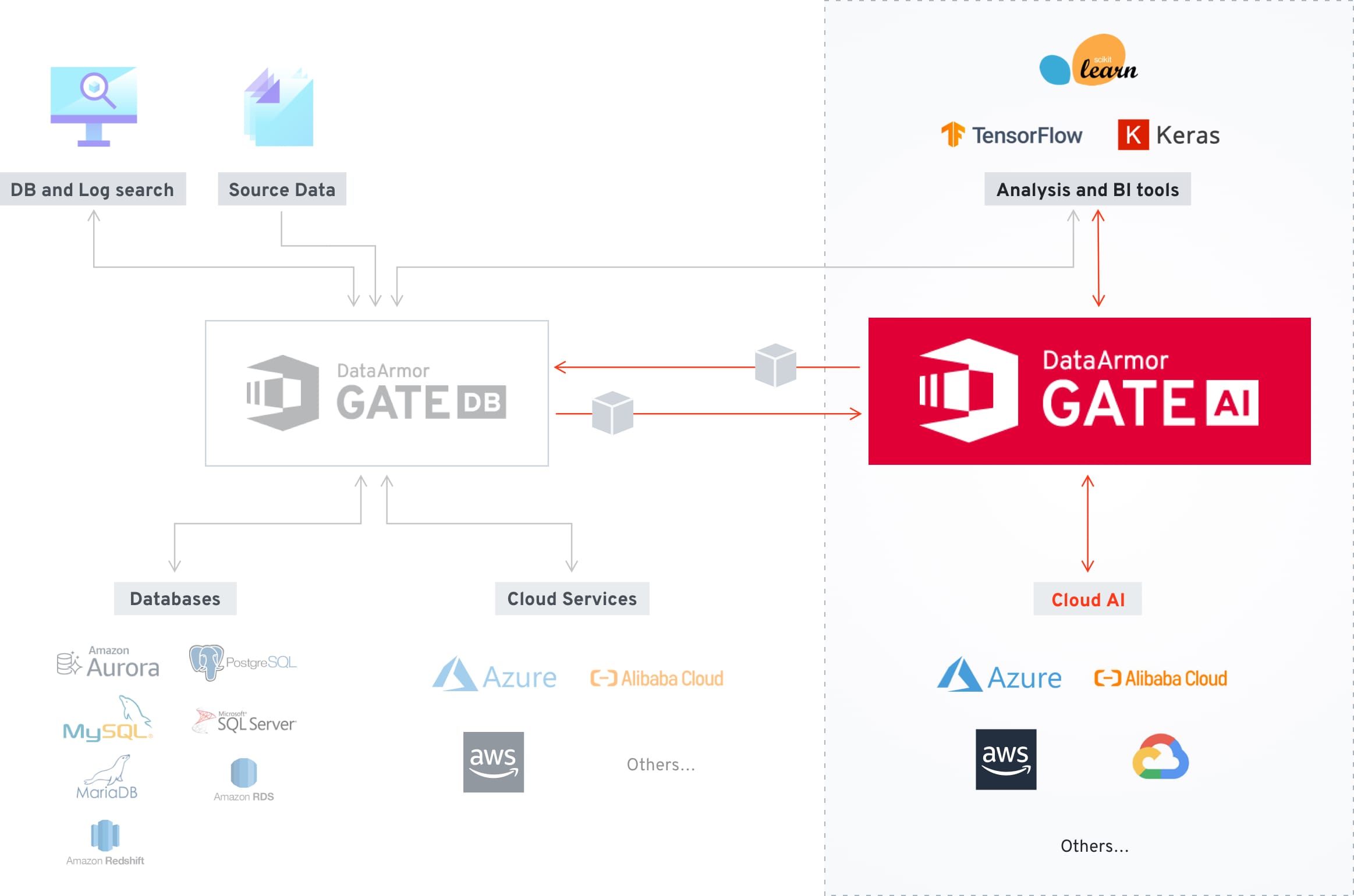

Widely Integrated

EAGLYS’ DataArmor Gate AI can be easily integrated with industry-standard services.

DataArmor Gate AI supports encrypting AI models as well as using AI models with encrypted data, so your AI and ML models can take advantage of secure computing.

We are currently implementing this functionality on a project basis and developing a drop-in solution for future implementations.

Case studies

Discover the different scenarios in which EAGLYS’ DataArmor solutions can revolutionize industries and businesses that work with data.

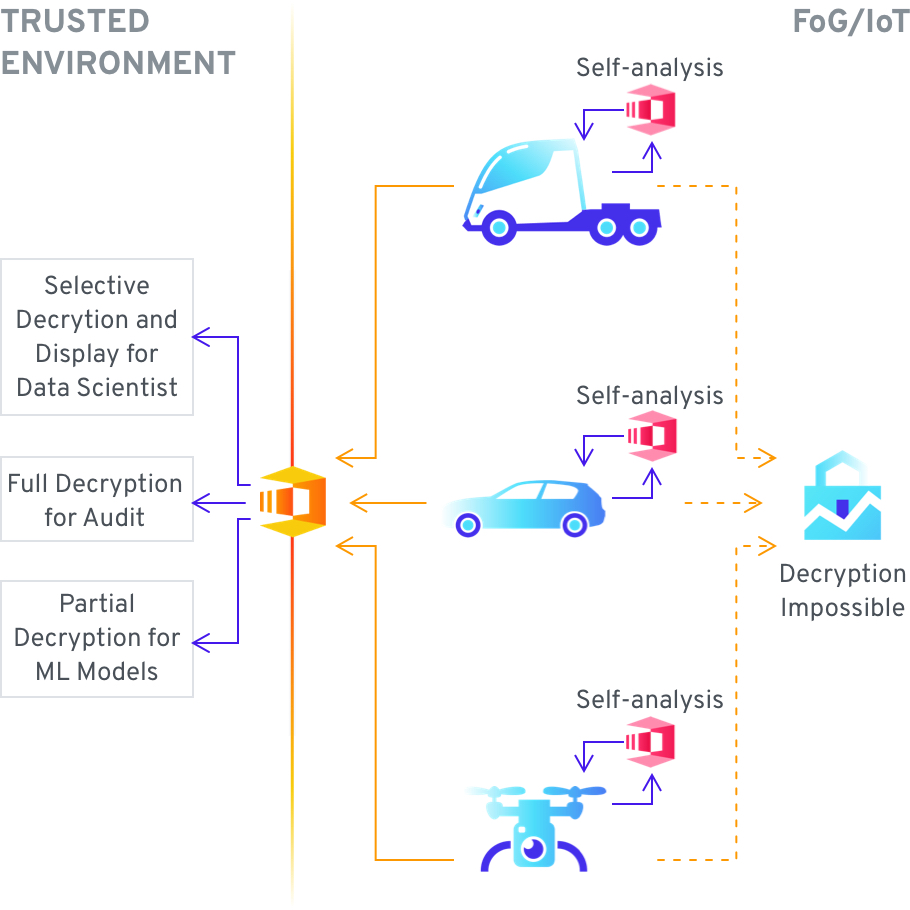

Secure computing can protect data that is aggregated from onboard sensors and stored on vehicles to enable functionality such as predicting necessary maintenance intervals or inferring the operational capabilities of the vehicle. This data needs protection because it often contains location data, and with sufficient resolution it can be used to infer the behavior of the owner, approximate who is traveling in the vehicle, and reveal the general scope of their activities. Because the data is necessarily stored on the vehicle itself, unencrypted data can easily be accessed during a visit to the mechanic, or maliciously by anyone who gains physical access to the vehicle. With secure computing, the data can be encrypted and live on the vehicle only in that form. The vehicle’s ML models can still operate over the encrypted data, but the data cannot be decrypted while it is in use. The data can also be uploaded to a central location in the cloud or on company servers and processed as an aggregate across a vehicle fleet, decrypted as needed for further analysis, or simply kept for compliance reasons.

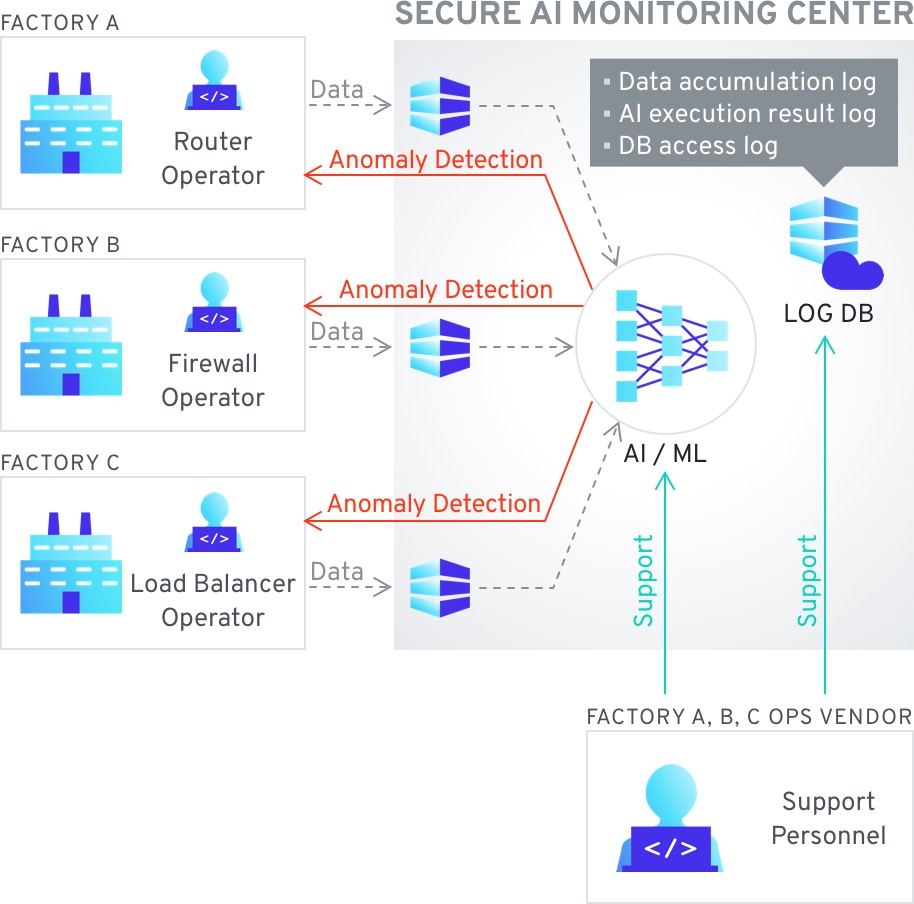

This use case concerns statistical manufacturing data, sourced from automation or IoT systems within the factory, that provides information on performance, production output, logistics, etc. This data might need to be usable within the factory for any system that has to analyze and adjust operations autonomously. The specific drivers of this use case are the security of the data where the factory may not be in a completely secure area, or where factory staff must not have access to detailed information while the automated system still needs to compute over that data. One example of the problems caused by access by the wrong parties is the potential to predict a company’s performance ahead of earnings reports based on factory performance data.

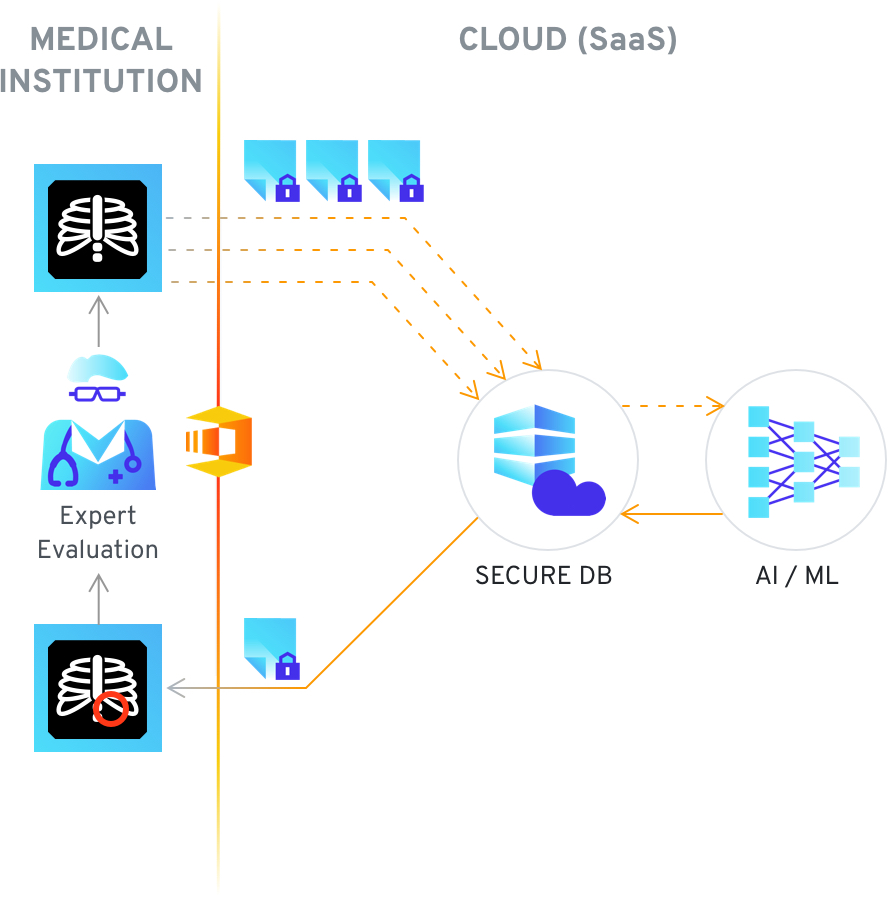

Secure computing can help train AI to detect anomalies in medical image data. Such training generally requires large data sets, while a single medical provider can produce only a limited volume of data. As a result, too little data is available to create anomaly-detection models, and services that would process patient data face limitations. The problem can be mitigated with secure computing, where patient data is encrypted and processed only in encrypted form. This gives access to a far wider scope of patient records and opens the opportunity to provide the same anomaly detection as a service. Encrypted patient data can be both analyzed for anomalies and used to improve the AI model, while it can only be decrypted by the originator of the data.

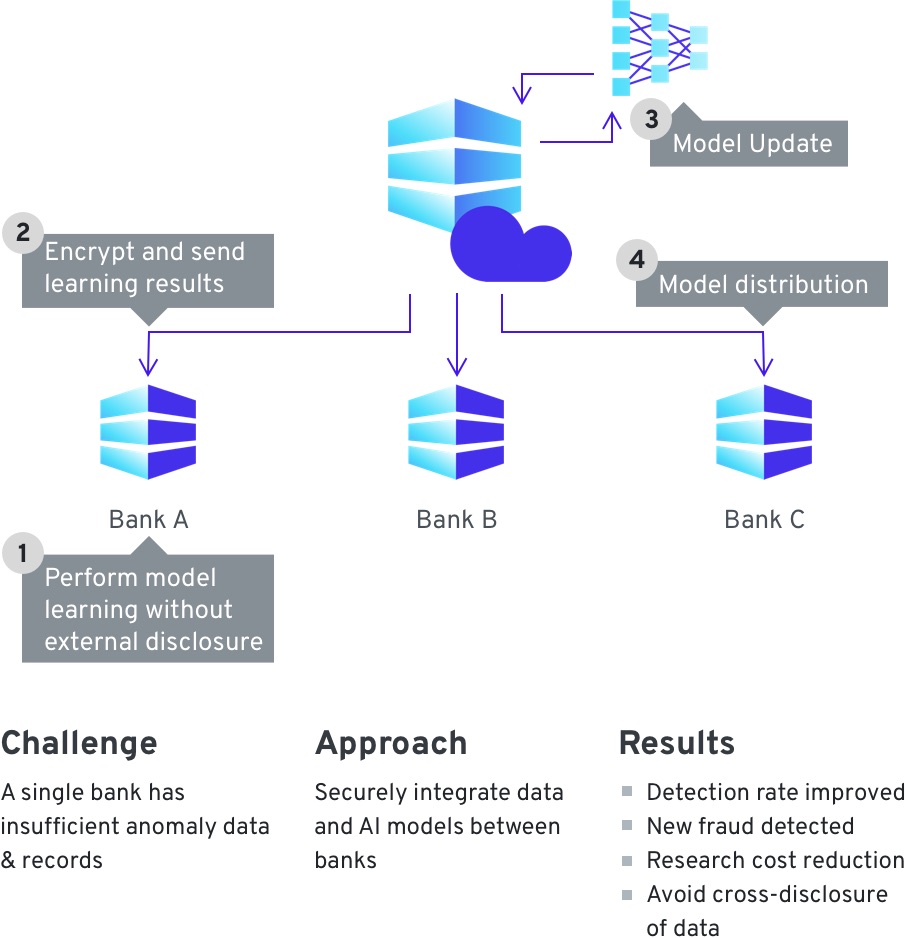

Secure computing enables fraud detection by letting organizations exchange information on fraudulent transactions. If the data is exchanged in an unencrypted format, it has to be obfuscated and sanitized of all private data, which makes any models built on it far less accurate. With secure computing solutions, entities can exchange their data in encrypted form and run analysis directly over the encrypted data, never accessing the customer information directly. This allows them to find anomalies and create accurate machine learning models that can detect such anomalies in their internal customer data, or to request keys to decrypt specific portions of the data set as needed. The result is faster and more accurate detection and prevention of fraud.